A project of the Robotics 2017 class of the School of Information Science and Technology (SIST) of ShanghaiTech University.

Course Instructor: Prof. Sören Schwertfeger; Project Advisor: Prof. Andre Rosendo

Zhu Wangshu, She Xinghao and Chen Jinji

Introduction

Our project trains a Jackal robot to avoid obstacles in a confined space. It uses a neural network together with a genetic algorithm (selection, crossover, and mutation) to evolve the robot's controller.

We feed the distances measured in three directions into a simple neural network, whose outputs drive the Jackal robot. The Jackal tries different trajectories and directions, and the genetic algorithm iteratively improves the network weights until the robot finds a good route and avoids obstacles reliably. This approach has a wide range of applications, such as autonomous driving and robotic vacuum cleaners, and it is interesting to watch the Jackal become more capable as it keeps learning.

Keywords: Jackal robot, neural network, genetic algorithm

System Description

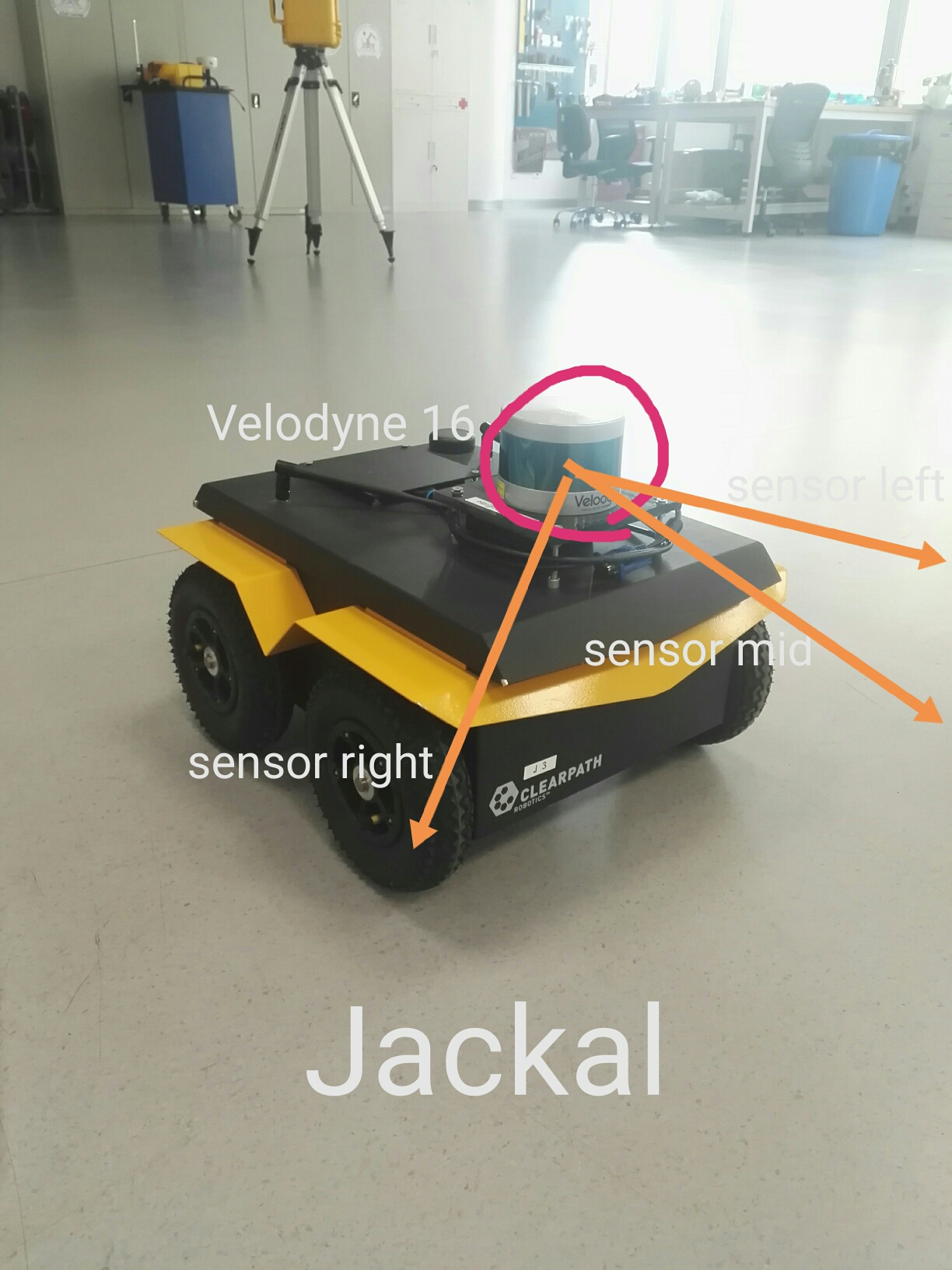

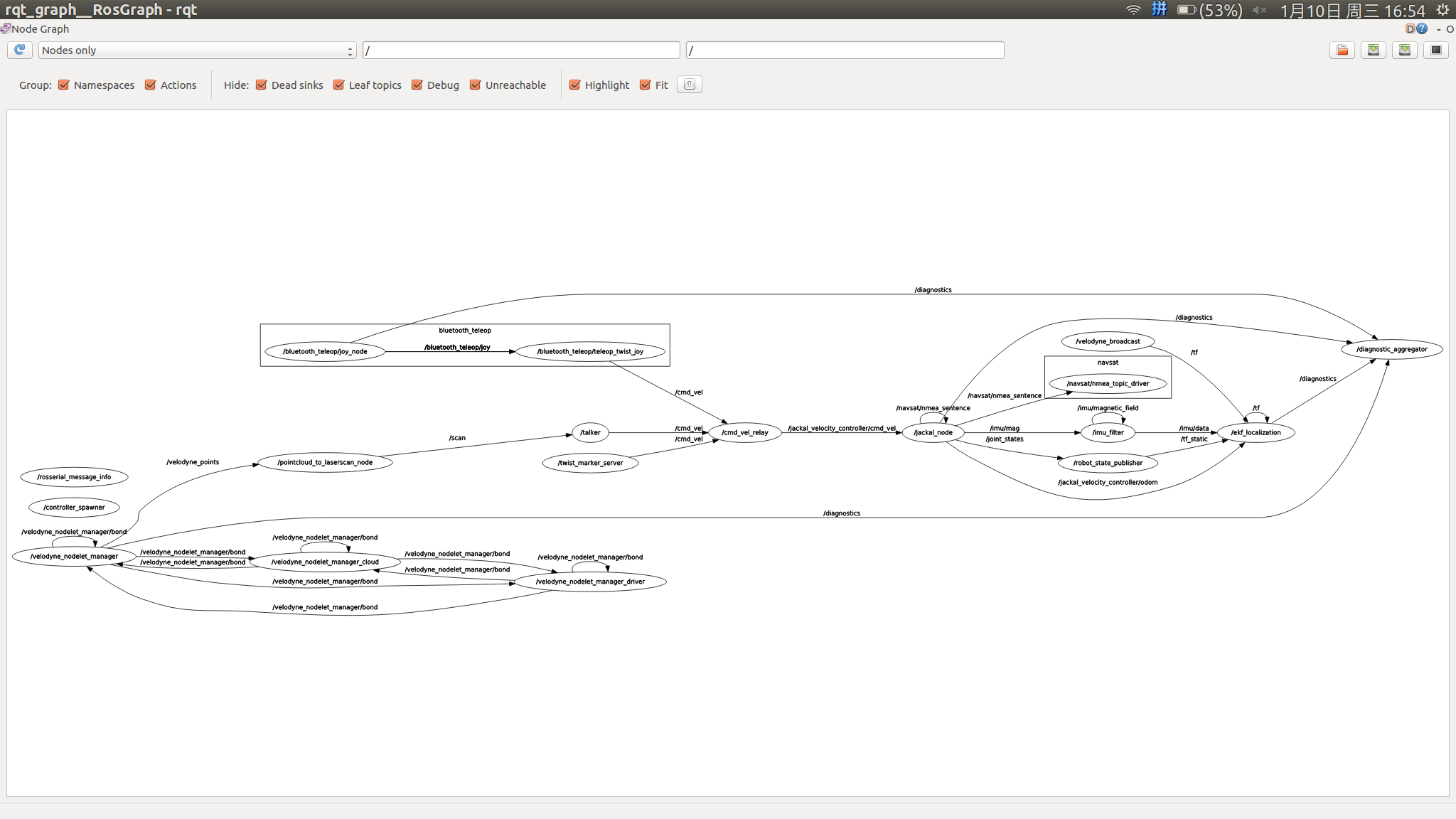

First, we obtain PointCloud2 data from the Velodyne VLP-16 mounted on the Jackal robot. The pointcloud_to_laserscan node converts the PointCloud2 data to LaserScan data, from which we can easily read the distances in three directions: directly in front of the Jackal, ahead to the left, and ahead to the right.

Fig.1: Jackal with Velodyne
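As a rough illustration of reading three directional distances from a laser scan, the sketch below takes the closest valid reading in three angular sectors. The arguments `ranges`, `angle_min`, and `angle_increment` mirror the fields of the ROS `sensor_msgs/LaserScan` message. The function name `sector_distances`, the sector centers (45°, 0°, -45°), the sector half-width, and the `max_range` fallback are assumptions made for this example, not values taken from the project.

```python
import math


def sector_distances(ranges, angle_min, angle_increment,
                     centers_deg=(45.0, 0.0, -45.0),
                     half_width_deg=15.0, max_range=10.0):
    """Return the closest valid range in each sector.

    The default sectors are ordered left-ahead, front, right-ahead.
    All sector parameters are assumed values for illustration only.
    """
    result = []
    for center in centers_deg:
        lo = math.radians(center - half_width_deg)
        hi = math.radians(center + half_width_deg)
        closest = max_range  # fallback when the sector has no valid reading
        for i, r in enumerate(ranges):
            angle = angle_min + i * angle_increment
            # Skip inf/NaN and non-positive readings, which carry no distance.
            if lo <= angle <= hi and math.isfinite(r) and r > 0.0:
                closest = min(closest, r)
        result.append(closest)
    return result
```

In a ROS node, this function would typically be called from the LaserScan subscriber callback with `msg.ranges`, `msg.angle_min`, and `msg.angle_increment`.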

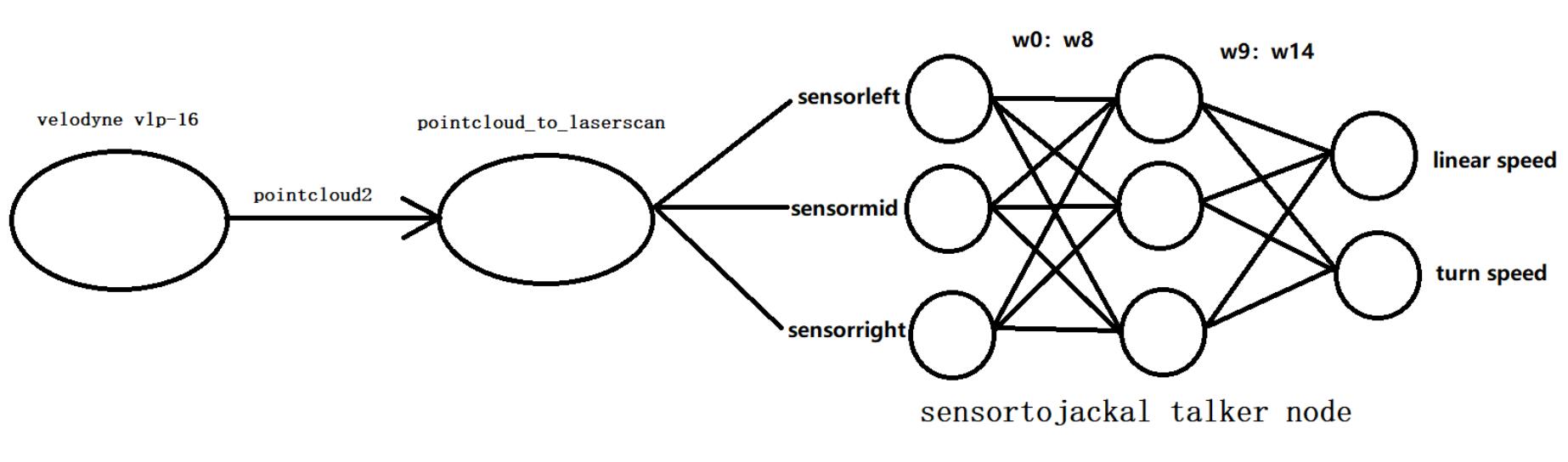

Then we follow the outline of an evolutionary algorithm. Each generation is a set of six individuals. For each individual, we first randomly generate fifteen weights. These fifteen weights are combined linearly with the three values from the laser scanner (after some processing) to produce the linear speed and the turning speed.

Fig.2: Neural network
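The mapping from three distances to two speed commands can be sketched as follows. The text gives the weight count (fifteen) but not the exact layout, so this example assumes a 3-3-2 network without bias terms (3×3 + 3×2 = 15 weights). The `tanh` squashing, the weight range of [-1, 1], and the `max_linear` and `max_angular` scaling factors are also assumptions for illustration, not details from the project.

```python
import math
import random

# Assumed layout: 3 inputs -> 3 hidden units -> 2 outputs, no biases.
N_IN, N_HIDDEN, N_OUT = 3, 3, 2
N_WEIGHTS = N_IN * N_HIDDEN + N_HIDDEN * N_OUT  # 15


def random_weights(rng=random):
    """Draw one individual: fifteen weights in [-1, 1] (assumed range)."""
    return [rng.uniform(-1.0, 1.0) for _ in range(N_WEIGHTS)]


def forward(weights, distances, max_linear=1.0, max_angular=1.0):
    """Map three processed distances to (linear speed, turning speed)."""
    if len(weights) != N_WEIGHTS or len(distances) != N_IN:
        raise ValueError("expected 15 weights and 3 distances")
    w_hidden = weights[:N_IN * N_HIDDEN]
    w_out = weights[N_IN * N_HIDDEN:]
    # Weighted sum of the inputs for each hidden unit, squashed by tanh.
    hidden = [
        math.tanh(sum(w_hidden[h * N_IN + i] * distances[i]
                      for i in range(N_IN)))
        for h in range(N_HIDDEN)
    ]
    # Weighted sum of the hidden units for each output, squashed by tanh.
    out = [
        math.tanh(sum(w_out[o * N_HIDDEN + h] * hidden[h]
                      for h in range(N_HIDDEN)))
        for o in range(N_OUT)
    ]
    return max_linear * out[0], max_angular * out[1]
```

Bounding the outputs with `tanh` keeps the commanded speeds within the chosen limits regardless of the evolved weights, which is one simple way to keep early random individuals from issuing extreme commands.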

For each individual, the Jackal runs until 20 seconds have elapsed or it gets too close to an obstacle, and the distance it traveled is recorded as its fitness score. After all six individuals have completed their trials and received fitness scores, we generate the next generation according to the following rules. The first two individuals are the best two of the previous generation (those with the two highest fitness scores). The next two are produced by crossover: each takes half of its weights from the best individual and half from the second best. The last two are produced by mutation: each takes most of its weights from the best individual and replaces the rest with random values. The new generation is then run in the same way as the first, and the process repeats. In the end we may obtain a good gene (set of weights) adapted to the environment we built.

Fig.3: System Structure
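The generation rules described above (two elites, two crossover children, two mutants) could be sketched as below. The function name `next_generation`, the `mutation_rate` of 0.2, the random choice of which positions come from which parent, and the [-1, 1] range for new random weights are assumptions for this example. With fifteen weights, "half" is taken here as seven from the best individual and eight from the second best.

```python
import random


def next_generation(population, fitness, rng=random, mutation_rate=0.2):
    """Build six new individuals from the two fittest of the last generation."""
    ranked = sorted(zip(fitness, population),
                    key=lambda pair: pair[0], reverse=True)
    best, second = ranked[0][1], ranked[1][1]
    n = len(best)

    def crossover():
        # Half of the positions (rounded down) come from the best individual,
        # the remaining positions from the second best.
        from_best = set(rng.sample(range(n), n // 2))
        return [best[i] if i in from_best else second[i] for i in range(n)]

    def mutate():
        # Keep most weights of the best individual and replace a few
        # with fresh random values.
        return [rng.uniform(-1.0, 1.0) if rng.random() < mutation_rate else w
                for w in best]

    return [list(best), list(second),
            crossover(), crossover(),
            mutate(), mutate()]
```

A training loop would run each of the six individuals for one trial, collect the six fitness scores, and pass both lists to `next_generation` to obtain the population for the following round.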

Results

We placed boxes and other obstacles in the STAR Lab and let the Jackal run and learn in this arena. A successful system can leave the arena at "full speed" without crashing.

Fig.4: Jackal in arena

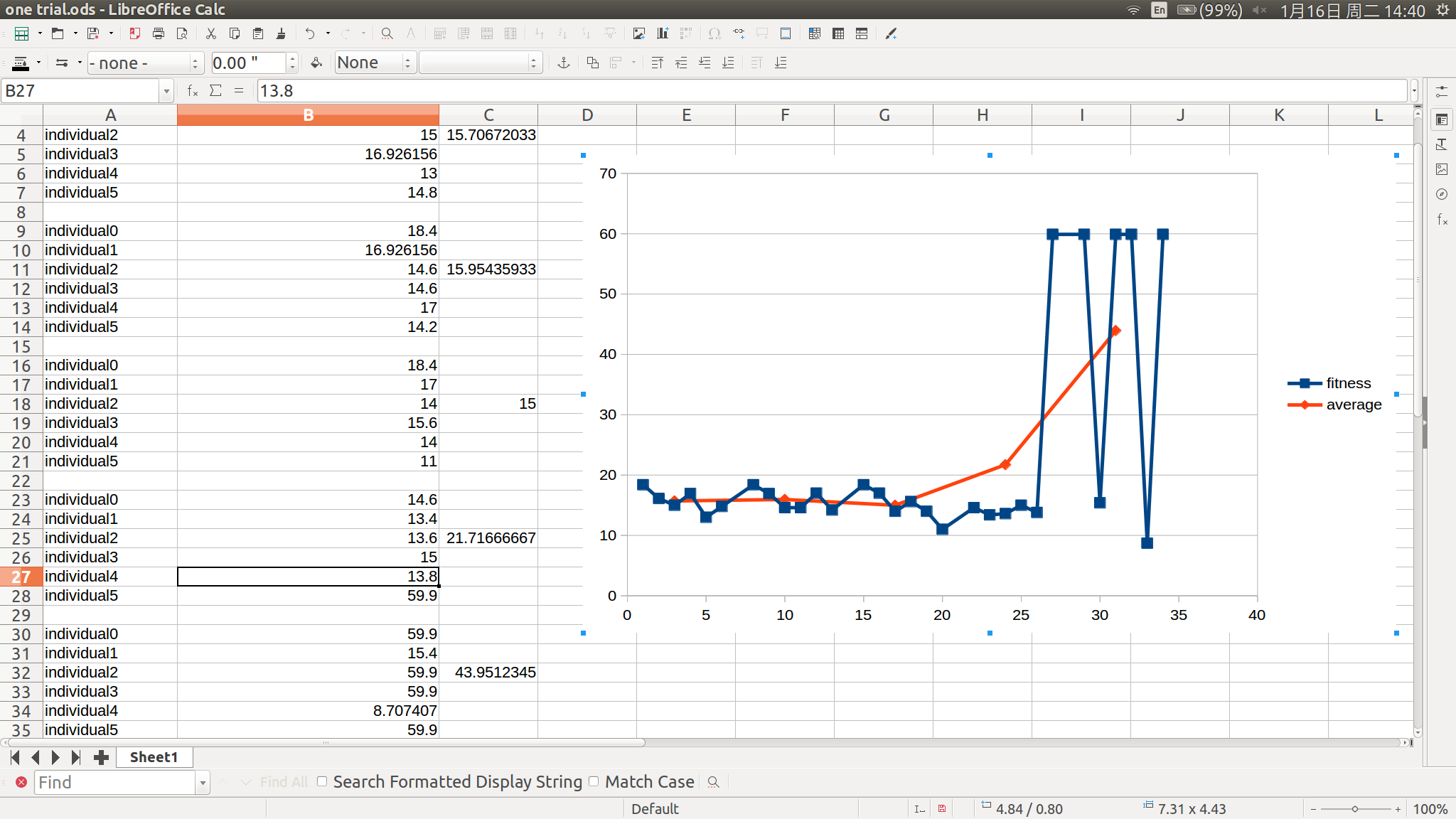

So far, the Jackal can turn left when it reaches the corner and can avoid some large obstacles. Across all trials, it found its way out of the arena after an average of 2.5 generations. With the genetic algorithm, we could observe the Jackal's improvement.

Fig.5: A typical trial